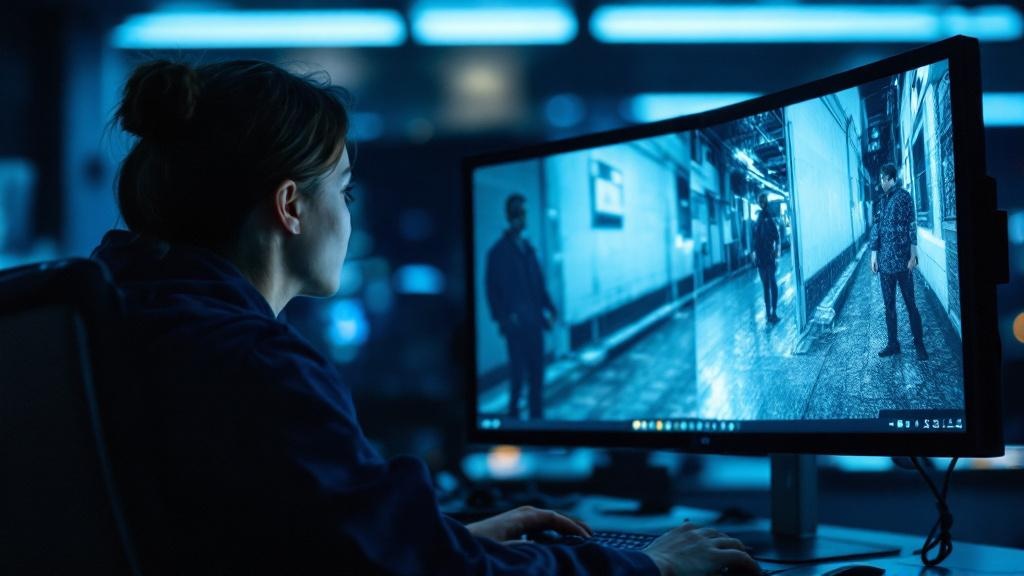

Why Fake Videos Look Deliberately Awful

Hany Farid, professor of computer science at the University of California, Berkeley, and founder of deepfake detection company GetReal Security, identifies deliberately poor video quality as a primary red flag. His analysis is directly applicable to the European information environment, where synthetic media has already been used to manipulate elections and spread health misinformation.

"It's one of the first things we look at. If I'm trying to fool people, what do I do? I generate my fake video, then I reduce the resolution so you can still see it, but you can't make out all the little details. And then I add compression that further obfuscates any possible artefacts."

Even advanced AI video generators such as Google's Veo and OpenAI's Sora still produce subtle inconsistencies. These might include unnaturally smooth skin textures, oddly shifting hair patterns, or background objects that move in impossible ways. When video quality is deliberately degraded, these telltale signs become much harder to spot.

Within the EU, this matters enormously. The European Commission's High-Level Expert Group on Artificial Intelligence has long flagged synthetic media as a systemic risk to democratic discourse. Věra Jourová, the Commission's former Vice-President for Values and Transparency, stated publicly in 2023 that AI-generated disinformation represented "one of the most urgent threats to free and fair elections in Europe." That warning has only grown more relevant as the tools have become cheaper and more accessible.

The Anatomy of AI Video Deception

Matthew Stamm, who heads the Multimedia and Information Security Lab at Drexel University, urges caution about over-applying the rule. "If you see something that's really low quality that doesn't mean it's fake. It doesn't mean anything nefarious."

However, when combined with other factors, poor quality becomes a powerful indicator. Viral clips of improbable human moments, whether strangers falling in love on public transport or implausible acts of heroism, have repeatedly shared three characteristics: they are short, blurry, and heavily compressed. European fact-checking organisations including Full Fact in the UK and Correctiv in Germany have documented dozens of such cases.

- Most AI videos are surprisingly brief, often just six to ten seconds long, because generating longer content is expensive and increases the likelihood of visible errors.

- Resolution is deliberately reduced to hide pixel-level inconsistencies that would be obvious in high-definition footage.

- Heavy compression creates blocky patterns and blurred edges that mask AI artefacts.

- Multiple short AI clips are often stitched together, with cuts every few seconds that experienced viewers can learn to spot.
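The four traits above can be combined into a rough triage check. The following is a minimal sketch in Python, not a detector: the `ClipInfo` fields, the function name, and every threshold are hypothetical values chosen for illustration and do not come from the experts quoted here. In line with Stamm's caution, a flag is a prompt for closer scrutiny, never proof that a clip is fake.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ClipInfo:
    """Basic metadata for a clip. All values are supplied by the caller."""
    duration_s: float                 # total length in seconds
    width_px: int                     # horizontal resolution
    height_px: int                    # vertical resolution, e.g. 360 or 1080
    fps: float                        # frames per second
    bitrate_kbps: float               # average video bitrate
    cut_times_s: List[float] = field(default_factory=list)  # hard cuts, if known


# Illustrative thresholds only (assumptions, not published figures).
MAX_SHORT_DURATION_S = 10.0    # "six to ten seconds"
MAX_LOW_RES_HEIGHT = 480       # at or below this counts as low resolution
MIN_BITS_PER_PIXEL = 0.05      # below this counts as heavy compression
MAX_MEAN_SHOT_LENGTH_S = 4.0   # "cuts every few seconds"


def red_flags(clip: ClipInfo) -> List[str]:
    """Return the names of the traits this clip shares with typical AI clips."""
    flags = []

    if clip.duration_s <= MAX_SHORT_DURATION_S:
        flags.append("short")

    if clip.height_px <= MAX_LOW_RES_HEIGHT:
        flags.append("low_resolution")

    # Bits per pixel per frame is a crude proxy for compression strength.
    pixels_per_second = clip.width_px * clip.height_px * clip.fps
    if pixels_per_second > 0:
        bits_per_pixel = (clip.bitrate_kbps * 1000) / pixels_per_second
        if bits_per_pixel < MIN_BITS_PER_PIXEL:
            flags.append("heavy_compression")

    # A clip with N cuts contains N + 1 shots.
    if clip.cut_times_s:
        mean_shot_length = clip.duration_s / (len(clip.cut_times_s) + 1)
        if mean_shot_length <= MAX_MEAN_SHOT_LENGTH_S:
            flags.append("frequent_cuts")

    return flags
```

An eight-second 640x360 clip at 200 kbps would trip the first three checks, whilst a two-minute 1080p clip at 8,000 kbps would trip none. Neither result settles whether the footage is authentic; it only suggests how much further checking the clip deserves.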

The Technical Arms Race

This cat-and-mouse game between AI creators and detection experts represents what Stamm calls "the greatest information security challenge of the 21st century." Technology companies are investing billions in making AI video more realistic, whilst researchers develop increasingly sophisticated detection methods.

The challenge extends beyond simple visual cues. Advanced detection now relies on statistical analysis, looking for digital fingerprints invisible to human eyes. Technology companies are also exploring ways to embed verification data directly into video files at the point of creation, similar to digital watermarks. The Coalition for Content Provenance and Authenticity, which counts the BBC, Adobe, and the European Broadcasting Union among its members, is developing exactly this kind of provenance infrastructure.

The numbers paint a sobering picture:

- Visual quality assessment currently achieves 70 to 80 per cent accuracy, but that effectiveness is declining rapidly as AI output improves.

- Length analysis sits at 85 to 90 per cent accuracy but is expected to remain useful for only two to three years.

- Statistical fingerprinting achieves 60 to 70 per cent and is considered long-term viable.

- Provenance tracking stands at 40 to 50 per cent today but is regarded by most researchers as the most promising long-term solution.

Researchers at ETH Zurich, one of Europe's leading technical universities, are actively working on watermarking frameworks that could be mandated under future revisions to the EU AI Act. The Act already requires providers of AI systems used to generate synthetic audio-visual content to ensure outputs are marked in a machine-readable format, though enforcement mechanisms remain nascent.

Rethinking How We Evaluate Visual Content

Digital literacy expert Mike Caulfield argues that constantly chasing new AI detection methods is ultimately futile. Instead, we need to fundamentally change how we evaluate online content.

Videos and images are becoming like text, where origin and verification matter more than surface appearance. The crucial questions are not about pixels and compression. They are about provenance: where did this content originate, who posted it, what is the context, and has a trustworthy source verified it?

This shift requires developing new habits around content consumption. Just as we would not believe written text simply because it appears on a screen, we can no longer trust visual content based solely on what our eyes tell us. The UK's Online Safety Act places duties on platforms to address synthetic media harms, and Ofcom's accompanying codes of practice are beginning to shape how verification tools must be surfaced to users.

Practical Guidance for European Users

How long will the poor-quality rule remain useful? Experts predict this visual cue will become unreliable within two to three years as AI video quality improves dramatically. The next generation of tools is expected to produce high-definition content that is indistinguishable from authentic footage.

What should you look for besides video quality? Focus on length (most AI videos are under fifteen seconds), context (does the content seem too convenient or too dramatic?), and source verification (can you trace the video's origin to a credible outlet or first-hand account?).

Are AI video detection tools reliable? Current tools achieve 60 to 80 per cent accuracy under ideal conditions, but effectiveness drops significantly with compressed or degraded content. They are helpful but should not be your only verification method.

How do you verify suspicious video content? Check multiple sources, reverse image search key frames using tools such as Google Lens or InVID, examine the poster's credibility and posting history, and look for corroborating reports from established news outlets. When in doubt, do not share.
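For readers comfortable with a command line, the key-frame step can be partly automated. The sketch below, in Python, picks evenly spaced timestamps and builds one command per frame for the ffmpeg tool. Using ffmpeg is an assumption of this example rather than something the experts above recommend, and the function names and output file names are hypothetical. The code only builds the commands and does not run them; the resulting images would still be uploaded by hand to a reverse image search tool.

```python
from typing import List


def keyframe_timestamps(duration_s: float, count: int = 5) -> List[float]:
    """Return `count` evenly spaced timestamps, skipping the first and last frame."""
    if duration_s <= 0 or count <= 0:
        return []
    step = duration_s / (count + 1)
    return [round(step * i, 2) for i in range(1, count + 1)]


def ffmpeg_commands(video_path: str, duration_s: float, count: int = 5) -> List[List[str]]:
    """Build one ffmpeg argument list per key frame (the commands are not executed)."""
    commands = []
    for index, timestamp in enumerate(keyframe_timestamps(duration_s, count), start=1):
        commands.append([
            "ffmpeg",
            "-ss", str(timestamp),      # seek to the timestamp
            "-i", video_path,           # input file
            "-frames:v", "1",           # write a single video frame
            f"frame_{index:02d}.png",   # hypothetical output name
        ])
    return commands


if __name__ == "__main__":
    # Print the commands for a 12-second clip so they can be reviewed before running.
    for command in ffmpeg_commands("clip.mp4", duration_s=12.0, count=3):
        print(" ".join(command))
```

Sampling several frames rather than one matters for stitched clips, because each short segment may have been generated separately and may match a different source.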

The battle against AI deception will require a combination of technological solutions, policy interventions, and fundamental changes in how we consume digital content. As detection methods become more sophisticated, so too will the methods used to circumvent them. The future of online video lies not in our ability to spot fakes with the naked eye, but in our willingness to demand transparency and verification from content creators and platforms alike.
